MLOps Explained

A Complete Introduction

MLOps Definition

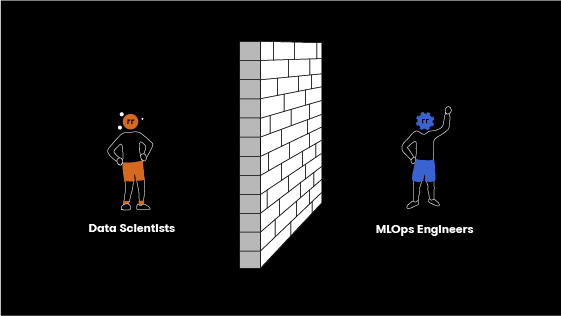

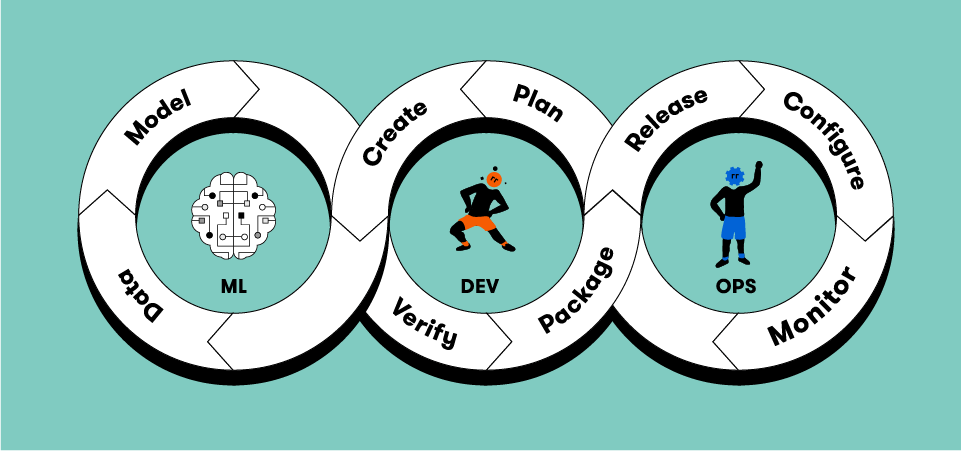

MLOps—the term itself derived from machine learning or ML and operations or Ops—is a set of management practices for the deep learning or production ML lifecycle. These include practices from ML and DevOps alongside data engineering processes designed to efficiently and reliably deploy ML models in production and maintain them. To effectively achieve machine learning model lifecycle management, MLOps fosters communication and collaboration between operations professionals and data scientists.

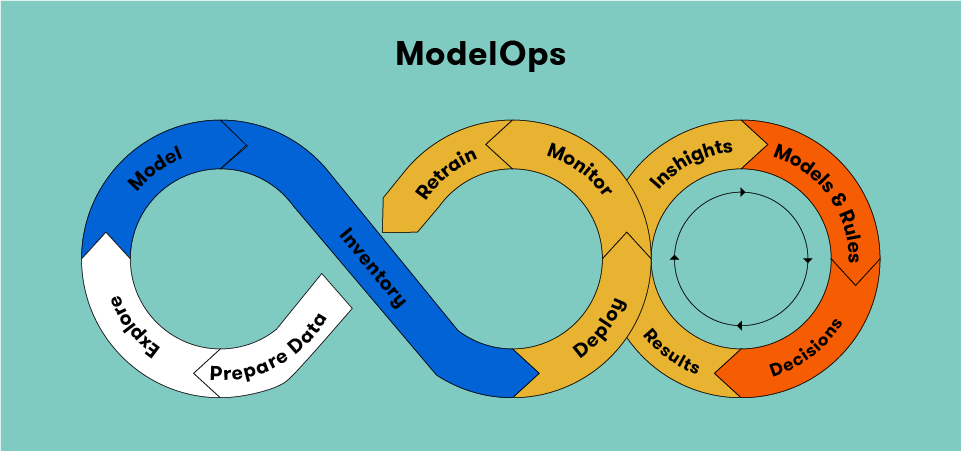

Because MLOps focuses on the operationalization of ML models, it is a subset of ModelOps. ModelOps spans the operationalization of AI models of all kinds, including ML models.

Machine learning operations, or MLOps, focuses on improving the quality of production ML and increasing automation while maintaining attention to regulatory and business requirements. This balanced focus is similar to a DataOps or DevOps approach.

However, while DataOps and DevOps are mature sets of best practices and MLOps began in a similar way, MLOps is still evolving. MLOps now encompasses the entire ML lifecycle: integration of model generation with the software development lifecycle, including continuous integration and delivery; deployment; orchestration; governance; monitoring of health and diagnostics; and analysis of business metrics.

The result is an independent approach to ML lifecycle management and an ML engineering culture that applies DevOps best practices to an ML environment, unifying ML system development and operations. Ongoing advocacy for monitoring and automation at all steps of ML system construction, including testing, integration, deployment, release, and management of infrastructure, is central to practicing MLOps.

FAQs

What is MLOps?

The practices and technology of Machine Learning Operations (MLOps) offer a managed, scalable means to deploy and monitor machine learning models within production environments. MLOps best practices allow businesses to successfully run AI.

MLOps is modeled on DevOps, the existing practice of more efficiently writing, deploying, and managing enterprise applications. DevOps began as a way to unite software developers (the Devs) and IT operations teams (the Ops), destroying data silos and enabling better collaboration.

MLOps shares these aims but adds data scientists and ML engineers to the team. Data scientists curate datasets and analyze them by creating AI models for them. ML engineers are the people who use automated, disciplined processes to run the datasets through the models.

MLOps, then, is that deeply collaborative communication between the ML component of the team (the data scientists) and Ops, the portion of the team focused on production or operations. ML operations aims to automate as much as possible, eliminate waste, and produce deeper, more consistent insights using machine learning.

Huge amounts of data and machine learning can offer tremendous insights to a business. However, without some form of systemization, ML can lose focus on business interest and devolve into a scientific endeavor.

MLOps provides that clear direction and focus on organizational interest for data scientists with measurable benchmarks.

Some of the critical capabilities of MLOps that enable machine learning in production include:

Simplified deployment. Data scientists may use many different modeling frameworks, languages, and tools, which can complicate the deployment process. MLOps enables IT operations teams in production environments to more rapidly deploy models from various frameworks and languages.

ML monitoring. General-purpose software monitoring tools are not designed for machine learning monitoring. In contrast, the monitoring that MLOps enables is designed for machine learning, providing model-specific metrics, detection of data drift for important features, and other core functionality.

Life cycle management. Deployment is merely the first step in a lengthy update lifecycle. To maintain a working ML model, the team must test the model and its updates without disrupting business applications; this is also the realm of MLOps.

Compliance. MLOps offers traceability, access control, and audit trails to minimize risk, prevent unwanted changes, and ensure regulatory compliance.

Why MLOps is Important

Managing models in production is challenging. To optimize the value of machine learning, machine learning models must improve efficiency in business applications or support efforts to make better decisions as they run in production. MLOps practices and technology enable businesses to deploy, manage, monitor, and govern ML. MLOps companies assist businesses in achieving better performance from their models and reaching ML automation more rapidly.

Important data science practices are evolving to include more model management and operations functions, ensuring that models do not negatively impact business by producing erroneous results. Retraining models with updating data sets now includes automating that process; recognizing model drift and alerting when it becomes significant is equally vital. Maintaining the underlying technology, MLOps platforms, and improving performance by recognizing when models demand upgrades are also core to model performance.

This doesn’t mean that the work of data scientists is changing. It means that machine learning operations practices are eliminating data silos and broadening the team. In this way, it is enabling data scientists to focus on building and deploying models rather than making business decisions, and empowering MLOps engineers to manage ML that is already in production.

Benefits of MLOps

MLOps can assist organizations in many ways:

Scaling. MLOps is critical to scaling an organization’s number of machine learning-driven applications.

Trust. MLOps also builds trust for managing machine learning in dynamic environments by creating a repeatable process through automation, testing, and validation. MLOps enhances the reliability, credibility, and productivity of ML development.

Seamless integration, improved communication. Similar to DevOps, MLOps follows a pattern of practices that aim to integrate the development cycle and the operations process seamlessly. Typically, the data science team has a deep understanding of the data, while the operations team holds the business acumen. MLOps enhances ML efficiency by combining the expertise of each team, leveraging both skill sets. The enhanced collaboration and more appropriate division of expertise for data and operations teams established by MLOps reduce the bottleneck produced by non-intuitive, complex algorithms. MLOps systems create adaptable, dynamic machine learning production pipelines that flex to accommodate KPI-driven models.

Better use of data. MLOps can also radically change how businesses manage and capitalize on big data. By improving products with each iteration, MLOps shortens production life cycles, driving reliable insights that can be used more rapidly. MLOps also enables more focused feedback by helping to decipher what is just noise and which anomalies demand attention.

Compliance. The regulatory and compliance piece of operations is an increasingly important function, particularly as ML becomes more common. Regulations such as the Algorithmic Accountability Bill in New York City and the GDPR in the EU highlight the increasing stringency of machine learning regulations. MLOps keeps your team at the forefront of best practices and evolving law. MLOps systems can reproduce models in compliance with original standards, so your system stays compliant even as subsequent models and machine learning pipelines evolve. Your data team can focus on creating and deploying models knowing the operations team has ownership of regulatory processes.

Reduced risk and bias. Business risk via undermined or lost consumer trust can be the result of unreliable, inaccurate models. Unfortunately, training data and the volatile, complex data of real world conditions may be drastically different, leading models to make poor quality predictions. This renders them liabilities rather than assets; MLOps reduces this risk. Additionally, MLOps can help prevent some development biases—including some that can lead to missed opportunities, underrepresented audiences, or legal risk.

Many, if not most, current machine learning deployment processes are complex, manual, and cross-disciplinary, touching business, data science, and IT. This makes quick detection and resolution of model performance problems a challenge.

The goal of MLOps is to deploy the model and achieve ML model lifecycle management holistically across the enterprise, reducing technical friction, and moving into production with as little risk and as rapidly to market as possible. A model has no ROI until it is running in production.

MLOps Best Practices

There are several MLOps best practices that help organizations achieve MLOps goals. MLOps systems should be collaborative; continuous; reproducible; and tested and monitored.

Collaborative: Hybrid Teams

As mentioned above, bringing an ML model into production demands a skill set that was, in the past, the province of several different teams that were siloed and separate. A successful MLOps system requires a hybrid team that, as a group, covers that broad range of skills.

A successful team typically includes an MLOps engineer if possible, a data scientist or ML engineer, a data engineer, and a DevOps engineer. The key issue is that a data scientist working solo cannot accomplish a full set of MLOps goals; while the precise titles and organization of an MLOps team will vary, it does take a hybrid, collaborative team.

A data scientist may use R or Python to develop ML models without any business operations input, but without a unified case, putting that model into production can become messy and time-consuming. MLOps ensures that every step is fully audited and that collaboration starts on day one. Organizational transparency that includes company-wide visibility and permissions ensures that each team member is aware of even very granular details, empowering the more strategic deployment of ML models.

Continuous: Machine Learning Pipelines

The data pipeline, a sequence of actions that the system applies to data between its source and destination, is among the core concepts of data engineering. Data pipelines, sometimes referred to as MLOps pipelines, are usually defined in graph form, in which each node is an action and each edge represents an execution order or dependency. Data pipelines are also referred to as extract, transform, and load (ETL) pipelines, and many specialized tools exist for creating, managing, and running them.

Because ML models always demand data transformation in some form, they can be difficult to run and manage reliably. Using proper data pipelines offers many benefits in machine learning operations, including easier management, run-time visibility, code reuse, and scalability. ML is itself a form of data transformation, so by including steps specific to ML in the data pipeline, it becomes an ML pipeline.
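As an illustration of the graph form described above, the following is a minimal sketch of a pipeline whose nodes are actions and whose edges are dependencies. The step names (`extract`, `transform`, `train`) and the shared `ctx` dictionary are hypothetical, and the standard-library `graphlib` module (Python 3.9+) stands in for a dedicated pipeline tool.

```python
from graphlib import TopologicalSorter

# Hypothetical step functions; each reads and writes a shared context dict.
def extract(ctx):
    ctx["rows"] = [1.0, 2.0, 3.0, 4.0]

def transform(ctx):
    ctx["features"] = [x * 2 for x in ctx["rows"]]

def train(ctx):
    # Stand-in for model training: the "model" is just the feature mean.
    ctx["model"] = sum(ctx["features"]) / len(ctx["features"])

# Nodes of the graph: one action per step name.
STEPS = {"extract": extract, "transform": transform, "train": train}

# Edges of the graph: each step maps to the set of steps it depends on.
DEPENDENCIES = {"extract": set(), "transform": {"extract"}, "train": {"transform"}}

def run_pipeline():
    ctx = {}
    # static_order() yields every step after all of its dependencies.
    order = list(TopologicalSorter(DEPENDENCIES).static_order())
    for name in order:
        STEPS[name](ctx)
    return order, ctx

order, ctx = run_pipeline()
print(order, ctx["model"])
```

Adding the `train` node is what turns this data pipeline into an ML pipeline in the sense used here; a real system would typically delegate scheduling, retries, and logging to an orchestration tool.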

Independent from specific data instances, the ML pipeline enables tracking versions in source control and automating deployment via a regular CI/CD pipeline. This allows for the automated, structured connection of data planes and code.

Most machine learning models require two versions of the ML pipeline: the training pipeline and the serving pipeline. This is because although they perform data transformations that must produce data in the same format, their implementations vary significantly. Particularly for models that eschew batch prediction runs for serving in real-time requests, the means of access and data formats are very different.

For example, typically, the training pipeline runs across batch files that include all features. In contrast, the serving pipeline often receives only part of the features and runs online, retrieving the remainder from a database.

However different the two pipelines are, it is critical to ensure that they remain consistent. To do this, teams should attempt to reuse data and code whenever possible.
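One way to keep the two pipelines consistent is to route both through a single transformation function. The sketch below assumes hypothetical feature names (`age`, `purchases`) and uses a plain dictionary as a stand-in for the database the serving pipeline would query.

```python
import math

def transform(record):
    """Single source of truth for feature engineering (hypothetical features)."""
    return [math.log1p(record["purchases"]), record["age"] / 100.0]

def training_features(batch):
    # Training: every feature is already present in the batch file.
    return [transform(r) for r in batch]

def serving_features(request, feature_store):
    # Serving: the request carries only part of the features; the rest
    # is looked up (a dict stands in for the database here).
    record = {**feature_store[request["user_id"]], **request}
    return transform(record)

batch = [{"user_id": "u1", "age": 40, "purchases": 3}]
store = {"u1": {"age": 40}}
request = {"user_id": "u1", "purchases": 3}

# Both paths produce identical features for the same underlying record.
print(training_features(batch)[0] == serving_features(request, store))
```

Because only the data access differs between the two paths, a change to `transform` reaches training and serving at the same time.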

Reproducible: Model and Data Versioning

Consistent version tracking is essential to achieving reproducibility. In traditional software, code defines all behavior, so versioning the code is sufficient. In ML, additional information must be tracked, including model versions, the data used to train each one, and certain meta-information such as training hyperparameters.

Standard version control systems can track models and metadata, but they are often incapable of tracking data practically and efficiently because data may be too large and too mutable. Because model training frequently happens on a different schedule, it’s also important to avoid linking the code and model lifecycles. Finally, it’s essential to version data and connect every trained model to its precise versions of data, code, and hyperparameters.
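A minimal sketch of such a link is a record that ties a trained model to content hashes of its artifacts. The field names, the byte strings, and the commit id below are all hypothetical; dedicated data and model versioning tools store far richer metadata.

```python
import hashlib
import json

def fingerprint(data: bytes) -> str:
    # A content hash identifies an exact version of a file or artifact.
    return hashlib.sha256(data).hexdigest()

def build_model_record(model_bytes, data_bytes, code_version, hyperparameters):
    # One record per trained model, linking it to data, code, and settings.
    return {
        "model_sha256": fingerprint(model_bytes),
        "data_sha256": fingerprint(data_bytes),
        "code_version": code_version,
        "hyperparameters": hyperparameters,
    }

record = build_model_record(
    model_bytes=b"serialized-model",
    data_bytes=b"age,label\n40,1\n",
    code_version="abc1234",  # hypothetical commit id
    hyperparameters={"learning_rate": 0.1, "max_depth": 3},
)
print(json.dumps(record, indent=2, sort_keys=True))
```

Storing the hash rather than the data itself keeps the record small enough for ordinary version control, while the data can live in storage better suited to large files.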

ML models are typically designed to be unique, because there is no “one-size-fits-all” model for all businesses and all data. Even so, without some kind of MLOps framework or tooling, it can be impossible to reconstruct, to a similar degree of accuracy, a model that the same enterprise used in the past. This kind of ML project demands an audit trail of the previous model’s dataset and the versions of the code, framework, libraries, packages, and parameters.

A key part of an MLOps lifecycle, these attributes ensure reproducibility, the difference between an interesting experiment and a reliable process. This has led to the emerging concept of ‘data as code’: the ability to treat data models in MLOps the same way that containerized code is treated in DevOps, as versioned, reproducible, and portable data models that can be shared between teams, departments, or even organizations.

Tested and Monitored: Validation

Test automation is another core DevOps best practice that typically takes the form of integration testing and unit testing. Before deploying a new version, passing these tests is a prerequisite. Automated, comprehensive tests can dramatically accelerate the speed of production deployments, boosting confidence for the team.

Because no model produces results that are 100% correct, it is more difficult to test ML models. A simple binary pass/fail check on individual outputs is not enough; statistical model validation tests are needed instead. This, in turn, means that the team must select the right metrics to track and the thresholds for their acceptable values. This is usually done using empirical evidence, as well as by comparing the current model with previous benchmarks or models. All of these steps precede the determination that the ML model is ready for deployment.
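The sketch below shows one possible shape for such a validation gate: a candidate model must clear absolute minimums and must not regress against the previous model by more than a tolerance. The metric names, limits, and tolerance are illustrative assumptions, not recommended values.

```python
def validate_candidate(candidate, baseline, minimums, tolerance=0.01):
    """Return (ok, reasons). Metric names and limits are illustrative."""
    reasons = []
    # Absolute thresholds chosen by the team.
    for name, floor in minimums.items():
        if candidate[name] < floor:
            reasons.append(f"{name}={candidate[name]:.3f} below minimum {floor:.3f}")
    # Comparison against the previous model's benchmark.
    for name, previous in baseline.items():
        if candidate[name] < previous - tolerance:
            reasons.append(f"{name} regressed from {previous:.3f} to {candidate[name]:.3f}")
    return (not reasons, reasons)

ok, reasons = validate_candidate(
    candidate={"accuracy": 0.91, "recall": 0.78},
    baseline={"accuracy": 0.90, "recall": 0.82},
    minimums={"accuracy": 0.85, "recall": 0.75},
)
print(ok, reasons)
```

In this example the candidate clears both minimums but is rejected because recall dropped relative to the previous model, which is the kind of outcome a single pass/fail unit test would not surface.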

Validating input data is the first step toward a strong data pipeline. Common validations necessary for ML training and prediction include column types, file format and size, invalid values, and null or empty values.
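A minimal sketch of these row-level checks follows, assuming a hypothetical three-column schema and an illustrative valid range for `age`. It covers column types, null or empty values, and invalid values; file format and size checks would sit in front of it.

```python
import math

SCHEMA = {"age": int, "income": float, "country": str}  # hypothetical columns

def validate_rows(rows, schema=SCHEMA):
    """Return a list of (row_index, column, problem) tuples."""
    errors = []
    for i, row in enumerate(rows):
        for column, expected in schema.items():
            value = row.get(column)
            if value is None or value == "":
                errors.append((i, column, "null or empty"))
            elif not isinstance(value, expected):
                errors.append((i, column, f"expected {expected.__name__}"))
            elif isinstance(value, float) and math.isnan(value):
                errors.append((i, column, "invalid value (NaN)"))
        # Illustrative domain rule: ages outside 0..120 are treated as invalid.
        age = row.get("age")
        if isinstance(age, int) and not 0 <= age <= 120:
            errors.append((i, "age", "out of range"))
    return errors

rows = [
    {"age": 34, "income": 52000.0, "country": "DE"},
    {"age": -5, "income": None, "country": ""},
    {"age": "41", "income": float("nan"), "country": "US"},
]
errors = validate_rows(rows)
for error in errors:
    print(error)
```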

Tracking a single metric throughout the validation set’s entirety is insufficient. Model validation must be conducted individually for relevant slices or segments of the data, similar to the way that several cases must be tested in a solid unit test. For example, it is important to track separate metrics for male, female and other genders if gender might be one of a model’s relevant features, either directly or indirectly. Failing to validate in this way could lead to under-performing in important segments or fairness/bias issues.
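To make slice-level validation concrete, here is a small sketch that computes accuracy per slice and flags slices below a minimum. The `segment` key, the example records, and the 0.7 minimum are hypothetical.

```python
from collections import defaultdict

def accuracy_by_slice(examples, slice_key):
    """Accuracy computed separately for each value of slice_key."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for ex in examples:
        group = ex[slice_key]
        total[group] += 1
        correct[group] += int(ex["label"] == ex["prediction"])
    return {group: correct[group] / total[group] for group in total}

def weak_slices(per_slice, minimum):
    # Slices whose metric falls below the acceptable minimum.
    return sorted(g for g, acc in per_slice.items() if acc < minimum)

examples = (
    [{"segment": "A", "label": 1, "prediction": 1}] * 4
    + [{"segment": "B", "label": 1, "prediction": 1}] * 2
    + [{"segment": "B", "label": 1, "prediction": 0}] * 2
)
per_slice = accuracy_by_slice(examples, "segment")
overall = sum(ex["label"] == ex["prediction"] for ex in examples) / len(examples)
print(overall, per_slice, weak_slices(per_slice, minimum=0.7))
```

The overall accuracy looks acceptable here, while one segment performs no better than chance, which is exactly the situation a single global metric hides.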

ML pipelines should also validate the input’s higher level statistical qualities in addition to simpler validations performed by any data pipeline. For example, it will likely affect the trained model and its predictions if the standard deviation of a feature changes considerably between training datasets. This might reflect actual changes in the data, but it may also be the result of a data processing anomaly, so identifying and ruling out systematic errors which could hurt the model and repairing them is important.
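The standard deviation check mentioned above can be sketched as a comparison between a reference sample and a new sample of the same feature. The 25% limit and the sample values are illustrative assumptions; production systems often use more robust statistical tests.

```python
import statistics

def std_shift(reference, current):
    """Relative change in standard deviation between two samples of a feature."""
    ref_std = statistics.stdev(reference)
    if ref_std == 0:
        raise ValueError("reference sample has zero variance")
    cur_std = statistics.stdev(current)
    return abs(cur_std - ref_std) / ref_std

def check_feature(reference, current, max_relative_change=0.25):
    shift = std_shift(reference, current)
    return {"shift": shift, "alert": shift > max_relative_change}

reference = [10, 12, 11, 13, 9, 11]   # feature values in the earlier training set
current = [10, 20, 2, 25, 1, 11]      # same feature in the new training set

print(check_feature(reference, reference))
print(check_feature(reference, current))
```

An alert does not say whether the change is real or a processing anomaly; it only tells the team where to look before the new data reaches training.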

Monitoring production systems is critical to good performance, and even more so for ML systems. This is because the performance of ML systems relies both on factors that the team can mostly control, such as software and infrastructure, and on data, which the team can control to a far lesser extent. Therefore, it is important to monitor model prediction performance as well as standard metrics such as errors, latency, saturation, and traffic.

Since models work on new data, monitoring their performance presents an obvious challenge. Typically, there is no verified label for comparison with the model’s results. Certain situations present indirect means for assessing the effectiveness of the model; for example, a recommendation model’s performance might be indirectly assessed by measuring click rate. However, in many cases, statistical comparisons may be the only way to make these assessments, such as by calculating a percentage of positive classifications for a set period of time and alerting for any significant deviations during the time period.
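The statistical comparison described above can be sketched as follows: compute the share of positive classifications in a time window and alert when it moves too far from a baseline rate. The baseline of 20% and the allowed deviation of 10 percentage points are hypothetical values.

```python
def positive_rate(predictions):
    # Share of predictions in the window that are positive (1).
    return sum(predictions) / len(predictions)

def rate_alert(baseline_rate, window_predictions, max_abs_deviation=0.10):
    """Return (rate, alert) for one monitoring window."""
    rate = positive_rate(window_predictions)
    return rate, abs(rate - baseline_rate) > max_abs_deviation

baseline = 0.20
normal_window = [1, 0, 0, 0, 0, 1, 0, 0, 0, 0]
shifted_window = [1, 1, 0, 1, 0, 1, 0, 1, 0, 0]

print(rate_alert(baseline, normal_window))
print(rate_alert(baseline, shifted_window))
```

This check needs no ground-truth labels, which is why it remains usable when verified outcomes arrive late or never.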

To identify problems that affect specific segments, it’s important to monitor metrics across slices, not just globally.

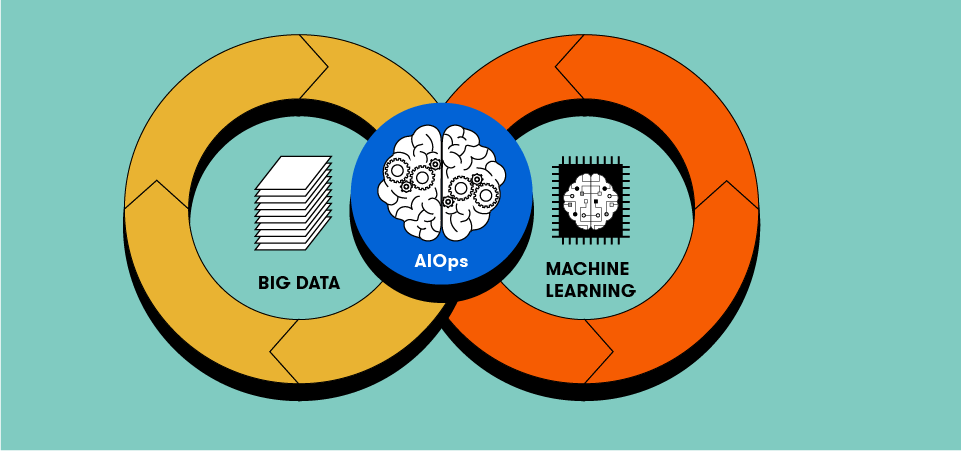

MLOps vs AIOps

Artificial Intelligence for IT Operations, or AIOps, is a multi-layered technology that enhances IT operations and enables machines to solve IT problems without human assistance. AIOps uses analytics and machine learning to analyze big data, and detect and respond to IT issues in real-time, automatically. For core technology functions in big data and machine learning, AIOps works as continuous integration and deployment. IT operations analytics, or ITOA, is a part of AIOps that examines AIOps data to improve IT practices.

In addition to taking on complex IT challenges, AIOps enables organizations to handle exponential data growth. Effective AIOps allows machines to independently correlate data points by creating an accurate inventory, and it automates the operations process holistically across hybrid environments. It is used to reduce noise, applying machine learning to detect anomalies and patterns. Teams also use AIOps to manage dependencies and testing, and to achieve system mapping and greater observability.

Compared to MLOps, AIOps is a narrower practice that automates IT functions using machine learning. The goal of MLOps is to bridge the gap between operation teams and data scientists, and consequently between the execution and development of ML models. In contrast, the focus of AIOps is smart analysis of root causes and automated management of IT incidents.

MLOps vs DevOps

DevOps is a set of practices in the traditional software development world that enables faster, more reliable software development into production. DevOps relies on automation, tools, and workflows to abstract accidental complexity away and allow developers to focus on more critical issues.

The reason that DevOps is not simply applied to ML is that ML is not merely code, but code and data. A data scientist creates an ML model by applying an algorithm to training data, and that model is eventually placed in production. This huge amount of data affects the model’s behavior in production. The behavior of the model also hinges on the input data that it receives at the time of prediction, which cannot be known beforehand. However, technologies such as Kubeflow allow the portability, versioning, and reproduction of ML models, enabling behaviors and benefits similar to those found in DevOps. This is called ‘data as code’.

DevOps achieves benefits such as increasing deployment speed, shortening development cycles, and increasing the dependability of releases by introducing two concepts during development of the software system:

Continuous Integration (CI)

Continuous Delivery (CD)

ML systems are similar to other software systems in many ways, but there are a few important differences:

CI includes testing and validating data, data schemas, and models, rather than being limited to testing and validating code and components.

CD refers to an ML training pipeline, a system that should deploy a model prediction service automatically, rather than a single software package or service.

In addition, ML systems feature continuous training (CT), a property that deals with retraining and serving models automatically, giving the team immediate feedback for assessing business risk throughout the process.

ML systems differ from other software systems in several other ways, further distinguishing DevOps and MLOps.

Teams. The team working on an ML project typically includes data scientists who focus on model development, exploratory data analysis, research, and experimentation. In contrast to team members on the DevOps side, these team members might not be capable of building production-class services as experienced software engineers are.

Development. To rapidly identify what best addresses the problem, ML is by necessity experimental. Team members test and tweak various algorithms, features, modeling techniques, and parameter configurations in this vein, but this creates challenges. Maximizing the reusability of code and maintaining reproducibility while tracking which changes worked and which failed are chief among them.

Testing. Compared to other software systems, testing an ML system is much more involved. Both kinds of systems require typical integration and unit tests, but ML systems also demand model validation, data validation, and quality evaluation of the trained model.

Deployment. In ML systems, deployment is not as simple as taking a model trained offline and placing it in real-world conditions to produce reliable predictions. Many situations demand a multi-step pipeline to automatically retrain the model before deployment. To create and deploy this kind of machine learning project pipeline, it is necessary to automate the ML steps that data scientists complete manually before deployment as they train and validate new models, which is very complex.

Production. ML models are subject to more sources of decay than are conventional software systems, such as data profiles that are constantly changing and suboptimal coding, and it is essential to consider this degradation and reduced performance. Tracking summary data statistics and monitoring online model performance is critical, and the system should be set to catch values that deviate from expectations and either send notifications or roll back when they occur.

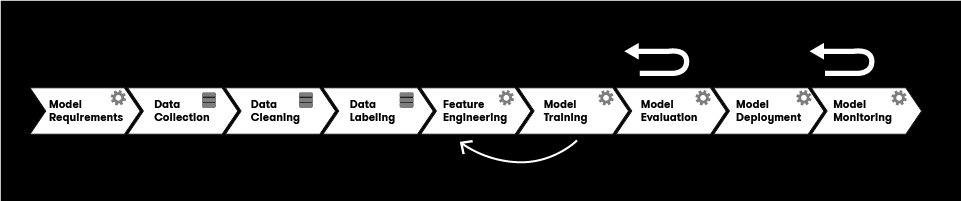

How to Deploy ML Models Into Production

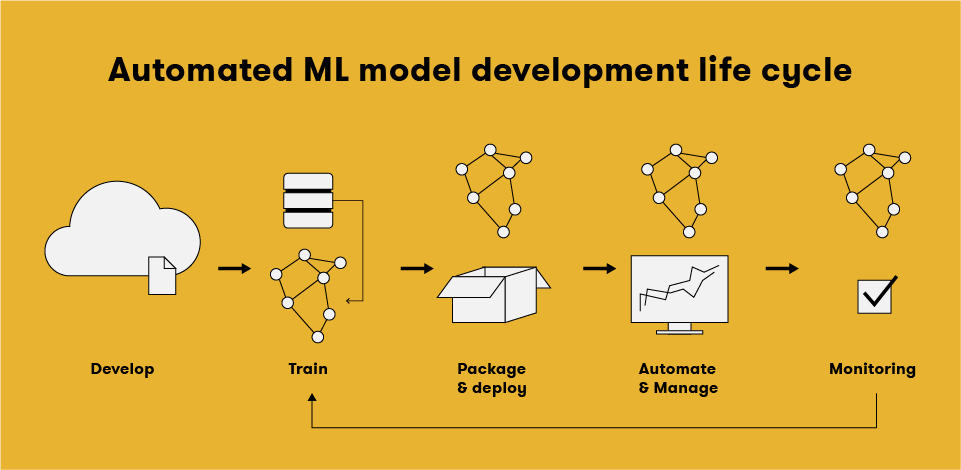

In any ML project, the process of delivering an ML model to production involves the following steps. These machine learning model lifecycle management steps can be completed manually or can be completed by an automatic pipeline.

Foundation. The team, including management and all stakeholders, defines the business use case for the data and establishes the success criteria for measuring model performance.

Data extraction. The data scientists on the team select relevant data from a range of sources and integrate it for the ML task.

Data analysis. Team data scientists perform exploratory data analysis (EDA) to analyze the data available for creating the ML model. The data analysis process allows the team to understand the characteristics and data schema the model will expect. It also enables the team to identify which feature engineering and data preparation the model needs.

Data preparation. The team prepares the data for the ML task, producing the data splits in their prepared format as the output. Data preparation involves cleaning the data, dividing it into training, validation, and test sets, and applying feature engineering and data transformations.

These data science steps allow the team to see what the data looks like, where it originates, and what it can predict. Next, the model operations life cycle, often managed by machine learning engineers, begins.

Model training. To train different ML models, the data scientist implements various algorithms with the prepared data. Next, to achieve the best performance from the ML model, the team conducts hyperparameter tuning on the implemented algorithms.

Model evaluation. The team evaluates the quality of the trained model on a holdout test set. This produces a set of metrics for assessing model quality as output.

Model validation. Next, the team confirms that the model’s predictive power exceeds a specific baseline, making it adequate for deployment.

Model serving. The team deploys the validated model to a target environment to serve predictions as part of a batch prediction system; as an embedded model for a mobile device or edge device; or to serve online predictions as web services or microservices with a REST API.

Model monitoring. The team monitors the predictive performance of the model to determine when to invoke a new iteration.
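As a rough sketch of how the steps above might be chained into an automatic pipeline, the example below runs extraction, preparation, training, evaluation, and validation in sequence. Everything in it is an assumption made for illustration: the data is synthetic, the model is a one-feature least-squares fit, and the baseline error of 1.0 is arbitrary.

```python
import random

def extract(n=200, seed=0):
    # Synthetic data standing in for real sources: y is roughly 3x + 1.
    rng = random.Random(seed)
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.5)) for x in xs]

def prepare(rows, test_fraction=0.2):
    # Data preparation: split into training rows and a holdout test set.
    cut = int(len(rows) * (1 - test_fraction))
    return rows[:cut], rows[cut:]

def train(rows):
    # Model training: ordinary least squares for a single feature.
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    variance = sum((x - mean_x) ** 2 for x, _ in rows)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x

def evaluate(model, rows):
    # Model evaluation: mean squared error on the holdout set.
    slope, intercept = model
    return sum((y - (slope * x + intercept)) ** 2 for x, y in rows) / len(rows)

def run(baseline_mse=1.0):
    train_rows, test_rows = prepare(extract())
    model = train(train_rows)
    mse = evaluate(model, test_rows)
    # Model validation: deploy only if the model beats the baseline.
    return {"model": model, "mse": mse, "deploy": mse < baseline_mse}

result = run()
print(result)
```

Serving and monitoring are left out; in this sketch the `deploy` flag marks the point where a validated model would be handed to the serving environment.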

There is a direct correlation between the maturity of the ML process and the level of automation of the deployment steps. This reflects how quickly the team can train new models given new data or implementations.

Google recognizes three levels of MLOps, beginning with a manual process level which involves no automation and is the most common, leading to ML and CI/CD pipelines that are both automated. Here are the three levels:

MLOps level 0 reflects a manual process. At this level, the team may have a state-of-the-art ML model created by a data scientist, but the build and deployment process for ML models is completely manual.

MLOps level 1 reflects an automated machine learning pipeline framework that enables continuous training (CT) of the ML model and continuous delivery of the model prediction service. The team must add metadata management, pipeline triggers, and automated data and model validation steps to the pipeline to automate the process of retraining models in production using new data.

MLOps level 2 reflects a robust, fully automated CI/CD pipeline system that can deliver reliable, rapid updates on the pipelines in production. An automated CI/CD pipeline system enables data scientists to explore and implement new ideas around feature engineering, hyperparameters, and model architecture rapidly, and automatically create new pipeline components, and test and deploy them to the target environment.

Model Training in Focus

Batch training and real-time training are the two main approaches to model training, although one-off training is sometimes possible.

In one-off training, a model is simply trained ad hoc by a data scientist. The team then pushes the model to production and leaves it there until its performance declines enough that the data scientist must address the issues and refresh the model.

Batch training is the most frequently used process for model training: a machine learning algorithm is trained on the available data in a batch or batches. The model can be trained again if necessary once this data is modified or updated.

Real-time training involves improving a model’s predictive power continuously by updating the model’s parameters with new data.
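A minimal sketch of this idea is a model whose parameters are nudged by each new example as it arrives. The class below is hypothetical and uses one stochastic gradient descent step on squared error per example; the learning rate and the replayed stream are illustrative.

```python
class OnlineLinearModel:
    """One-feature linear model updated one example at a time (illustrative)."""

    def __init__(self, learning_rate=0.01):
        self.weight = 0.0
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return self.weight * x + self.bias

    def update(self, x, y):
        # One stochastic gradient descent step on squared error.
        error = self.predict(x) - y
        self.weight -= self.learning_rate * error * x
        self.bias -= self.learning_rate * error

model = OnlineLinearModel()
# The true relationship in this toy stream is y = 2x + 1.
stream = [(x, 2.0 * x + 1.0) for x in (0.5, 1.0, 1.5, 2.0, 2.5, 3.0)]
for _ in range(2000):  # replaying the stream stands in for live data
    for x, y in stream:
        model.update(x, y)

print(model.weight, model.bias)
```

Unlike batch training, no full dataset is ever held in memory; the trade-off is that a burst of bad data can move the parameters immediately, so monitoring matters even more.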

Model Deployment Considerations

A trained model must then be deployed into production. Model deployment, also often called model serving, predicting, or model scoring, just refers to the process of using new data to create predicted values. (These phrases are context-dependent and there is nuance as to which term is best used in which situation.)

However, there are numerous important considerations for any MLOps team during model deployment.

Complexity. Model complexity is a critical factor affecting cost, storage, and related issues. Models such as logistic regression and linear regression typically require less storage space and are fairly easy to apply. However, more complex models such as a decision tree ensemble or a neural network require more time to load into memory on cold start and more computing time generally, and will ultimately cost more.

Data sources and experimentation framework. There may be differences between the training and production data sources. Data used in training should be contextually similar to production data, but recalculating all values to ensure total calibration is generally impractical. Creating a framework for experimentation typically includes A/B testing the performance of different models, evaluating their performance, and conducting tracking sufficient to debug accurately.

Monitoring performance: Model drift. Once deployed into production, it is necessary to monitor how well the model is performing to ensure that it continues to provide utility to the business. Each aspect of performance has its own measurements and metrics that affect the ML model life cycle.

Model drift refers to the change in the model’s predictive power over time. Model drift answers the question, “How accurate is this model today, compared to when it was first deployed?” Data can change significantly in a short time in a system that acquires new data very regularly. This also changes the features for training the model.

For example, a network outage, labor shortage, change in pricing, or supply chain failure may have a serious impact on the churn rate for a retailer using a model. Predictions will not be accurate unless the model accounts for this new data.

Therefore, a process that tests the model’s accuracy by measuring the model’s performance against either new data or an important business performance metric is essential to the model operations life cycle. Some frequently used model accuracy measures are Average Precision (AP) and Area Under the Receiver Operating Characteristic Curve (AUROC).

If the model fails to meet a threshold for acceptable performance, the system initiates a new process to retrain the model, and then deploys the newly trained model. Various MLOps tools and tools developed specifically for machine learning lifecycle management track which configuration parameter set and model file are currently deployed in production. Most of these tools include some process for measuring model performance on new data and retraining on new data when a model falls outside the acceptable bounds for performance.
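The threshold check can be sketched with AUROC computed directly from its ranking interpretation: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The function names, the example scores, and the minimum of 0.75 are assumptions for illustration; the pairwise loop is quadratic and intended only for small samples.

```python
def auroc(labels, scores):
    """AUROC as the probability that a positive outranks a negative (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative label")
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

def needs_retraining(labels, scores, minimum_auroc=0.75):
    # Trigger retraining when performance on new labeled data drops too low.
    return auroc(labels, scores) < minimum_auroc

labels = [1, 0, 1, 0, 1, 0]
fresh_scores = [0.9, 0.2, 0.8, 0.3, 0.7, 0.1]   # positives ranked above negatives
stale_scores = [0.4, 0.6, 0.5, 0.5, 0.3, 0.7]   # ranking has degraded

print(auroc(labels, fresh_scores), needs_retraining(labels, fresh_scores))
print(auroc(labels, stale_scores), needs_retraining(labels, stale_scores))
```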

Supervised learning models may require more time for model testing, because acquiring new labeled data can take time.

At times, the features that were selected during the original data science process lose relevance to the outcome being predicted because the input data has changed so much that simply retraining the model cannot improve performance. In these situations, the data scientist must revisit the entire process, and may need to add new sources of data or re-engineer the model entirely.

Finally, other measurements aren’t strictly related to accuracy, but are still applicable as model performance metrics. These may include skewed input data that cause predictions to be unfair, or algorithmic bias. Although these are more difficult to measure, in certain business contexts they are even more critical.

What is ModelOps?

ModelOps, short for model operations, is AI model operationalization. According to Gartner, ModelOps is “focused primarily on the governance and life cycle management of a wide range of operationalized artificial intelligence (AI) and decision models,” including machine learning models, enabling their retraining, retuning, or rebuilding. The result is a seamless flow within AI-based systems between AI model development, operationalization, and maintenance.

ModelOps is a holistic strategy for deploying models more rapidly and delivering predicted business value for Enterprise AI organizations based on moving models through the analytics life cycle iteratively and more quickly. ModelOps is rooted in both the DevOps and DataOps approaches. But what DevOps and DataOps achieve with software and application development versus data analytics, respectively, ModelOps achieves in the realm of models. The ModelOps approach focuses on speeding up each phase of moving models from the lab through validation, testing, and deployment, while maintaining expected results. It also ensures peak performance by focusing on continuous model monitoring and retraining.

ModelOps is central to any enterprise AI strategy because it orchestrates all in-production model life cycles spanning the entire organization. Beginning with placing the model into production and continuing on to apply business and technical KPIs and other governance rules to evaluate the model and update it as needed, ModelOps enables business professionals to independently evaluate AI models in production.

Even more critically, ModelOps brings the business team into the conversation in a meaningful way by offering insight into model outcomes and performance in a way that is clear and transparent without explanation from machine learning engineers or data scientists. This drive towards transparency can assist in deploying AI at scale as well as fostering trust in enterprise AI.

How to Operationalize Machine Learning and Data Science Projects

In the realm of machine learning operationalization there are several common pain points businesses must solve, such as the often lengthy delay between the start of a data science project and its deployment. To tackle these pain points, many organizations adopt a four-step approach.

Building Phase

For a more seamless transition through the building phase, the data science team must establish a meaningful, ongoing conversation with their counterparts on the business intelligence team. Only with that collaborative input can they develop a systematic ML operationalization process.

Data scientists create analytics pipelines using commercial applications as well as languages such as R and Python. To boost the model’s predictive power and more accurately represent the business problem it attempts to solve, they engineer new features, build predictive models, and use innovative ML algorithms.

The conditions in real-time production environments must also shape the work of data scientists. Data scientists must consider the structure of data in production environments as they build predictive models, and ensure that the actual conditions of real-time production allow for rapid enough feature creation as they engage in new feature engineering.

Management Phase

To maximize the functionality of the operationalization cycle process, teams should manage the model life cycle from a central repository and machine learning automation platform. The repository should track the approval, versioning, provenance, testing, deployment, and replacement of models through their life cycle along with accuracy metrics, the metadata associated with model artifacts, and dependencies between data sets and models.
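As a rough illustration of what such a repository tracks, the sketch below keeps versions, stage transitions, metrics, and the data set each model depends on in memory. The class names, stage names, model name, and metric values are all hypothetical; a real platform would persist this information and enforce approvals through its own interface.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelVersion:
    """One registered version of a model plus the metadata tracked for it."""
    name: str
    version: int
    training_dataset: str            # dependency between data set and model
    metrics: dict                    # accuracy metrics recorded at test time
    stage: str = "pending_approval"  # then: approved, deployed, replaced
    history: list = field(default_factory=list)  # provenance / audit trail

    def transition(self, new_stage: str) -> None:
        # Record every stage change with a timestamp so it can be audited later.
        self.history.append((self.stage, new_stage, datetime.now(timezone.utc)))
        self.stage = new_stage


class ModelRegistry:
    """Hypothetical in-memory central repository keyed by (name, version)."""

    def __init__(self) -> None:
        self._versions: dict = {}

    def register(self, name: str, training_dataset: str, metrics: dict) -> ModelVersion:
        # Version numbers increase monotonically per model name.
        version = 1 + max((v for (n, v) in self._versions if n == name), default=0)
        entry = ModelVersion(name, version, training_dataset, metrics)
        self._versions[(name, version)] = entry
        return entry

    def deploy(self, name: str, version: int) -> None:
        entry = self._versions[(name, version)]
        if entry.stage != "approved":
            raise ValueError("only approved versions can be deployed")
        # Mark whichever version is currently deployed as replaced.
        for (n, _), other in self._versions.items():
            if n == name and other.stage == "deployed":
                other.transition("replaced")
        entry.transition("deployed")


# Illustrative usage with made-up names and metric values.
registry = ModelRegistry()
v1 = registry.register("churn", "customers_2023q4", {"accuracy": 0.91})
v1.transition("approved")
registry.deploy("churn", 1)
v2 = registry.register("churn", "customers_2024q1", {"accuracy": 0.93})
v2.transition("approved")
registry.deploy("churn", 2)
```

After the second deployment, the first version is marked as replaced and its history records each stage it passed through.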

Deployment/Release Phase

The goal of deployment is to be able to test the model in real business conditions. This involves taking the data science pipeline out of the environment where it was developed and deploying it in the target runtime environment. To do this, the pipeline must be expressed in a language and format that is appropriate for that environment, so that it can be integrated into business applications and executed independently.

Monitoring/Activation Phase

After deployment, the model enters the monitoring or activation phase, when it operates under real-world business conditions and the team monitors it for its business impact and for the accuracy of its predictions. Ongoing monitoring, tuning, re-evaluation, and management of deployed models is critical, because the models must adjust to changing underlying data yet remain accurate.

For a successful monitoring phase, engage both analytical and nonanalytical personnel as stakeholders. Constantly monitor and revalidate the value the ML model delivers to the business. This process should account for various kinds of input, including feedback from human experts and the results of expert-approved champion-challenger retraining loops.
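A champion-challenger loop can be sketched as a comparison between the model currently in production (the champion) and a retrained candidate (the challenger) on recent labeled data. The example below is a minimal sketch under stated assumptions: the function names, the `min_gain` threshold, and the toy threshold models are hypothetical, and the expert sign-off is reduced to a boolean flag.

```python
def accuracy(predict, examples):
    """Fraction of (features, label) pairs the model predicts correctly."""
    correct = sum(1 for features, label in examples if predict(features) == label)
    return correct / len(examples)


def choose_champion(champion, challenger, recent_examples,
                    min_gain=0.02, approved_by_expert=False):
    """Return the model that should serve traffic next.

    The challenger replaces the champion only if it beats it by at least
    `min_gain` on recent labeled data AND a human expert has signed off.
    """
    champion_acc = accuracy(champion, recent_examples)
    challenger_acc = accuracy(challenger, recent_examples)
    if approved_by_expert and challenger_acc - champion_acc >= min_gain:
        return challenger
    return champion


# Toy models: classify a number as 1 ("high") or 0 ("low") using a threshold.
def champion(x):
    return int(x > 50)


def challenger(x):
    return int(x > 30)


# Made-up recent data in which the true boundary has drifted down to 30.
recent = [(10, 0), (25, 0), (35, 1), (45, 1), (60, 1), (80, 1)]
next_model = choose_champion(champion, challenger, recent, approved_by_expert=True)
```

Requiring both a measurable gain and explicit approval keeps a human in the loop, so a challenger is never promoted on metrics alone.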

What is the Typical Machine Learning Workflow?

Machine learning workflows vary by project and define the steps a team takes during a specific machine learning project. The phases of typical machine learning workflow management include collecting data, data pre-processing, constructing datasets, model training and refinement, evaluation, and deployment to production.

Collecting data

Gathering machine learning data is among the most impactful phases of any machine learning workflow. The quality of the data collected during this phase defines the potential accuracy and utility of the ML project.

The team must first identify data sources, and then collect data from each source to create a single dataset. This might involve downloading open source data sets, streaming data from IoT sensors, or building a data lake from various logs, files, or media with any number of machine learning workflow tools.

Data pre-processing

The team then subjects all collected data to pre-processing, including cleaning the data, verifying it, and formatting it into a dataset that is usable for the project at hand. This may be fairly straightforward in situations where the team is gathering data from a single source. However, when it is necessary to aggregate several data sources, the team must verify that data is equally reliable, that data formats match, and that they have removed any duplicative data.
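The following sketch shows what aggregating two sources might look like: each record is normalized to a shared format, rows that fail verification are skipped, and duplicates are removed. The field names (`email`, `signup`, `amount`), the two sources, and their date formats are assumptions made for illustration.

```python
from datetime import datetime


def normalize(record, date_format):
    """Bring one raw record into the shared schema."""
    return {
        "email": record["email"].strip().lower(),
        "signup": datetime.strptime(record["signup"], date_format).date(),
        "amount": float(record["amount"]),
    }


def merge_sources(sources):
    """Normalize every source, drop invalid rows, and remove duplicates.

    `sources` is a list of (records, date_format) pairs, one per data source.
    """
    seen = set()
    merged = []
    for records, date_format in sources:
        for raw in records:
            try:
                row = normalize(raw, date_format)
            except (KeyError, ValueError):
                continue  # verification: skip rows that cannot be parsed
            key = (row["email"], row["signup"])
            if key not in seen:  # de-duplication across sources
                seen.add(key)
                merged.append(row)
    return merged


# Made-up records from two hypothetical sources with different date formats.
crm = [{"email": "Ann@Example.com ", "signup": "2024-01-05", "amount": "10.5"}]
billing = [
    {"email": "ann@example.com", "signup": "05/01/2024", "amount": "10.50"},  # duplicate
    {"email": "bob@example.com", "signup": "not a date", "amount": "3"},      # invalid
    {"email": "cat@example.com", "signup": "07/02/2024", "amount": "8"},
]
clean = merge_sources([(crm, "%Y-%m-%d"), (billing, "%d/%m/%Y")])
```

Here a duplicate is defined as the same email and signup date; a real project would choose the key that identifies a record in its own data.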

Constructing datasets

Building datasets means breaking down the processed data into three datasets: the training dataset, the validation dataset, and the test dataset.

The team uses the training dataset to train the algorithm initially and teach it to process information; this is the data from which the model learns its parameters. The team uses the validation dataset to estimate how accurate the model is and tune its parameters. The test dataset is designed to reveal any mistraining or problems in the model, and the team uses it to assess model performance and accuracy.
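A three-way split can be sketched in a few lines. The 70/15/15 proportions and the function name below are assumptions chosen for illustration; the article does not prescribe specific ratios.

```python
import random


def split_dataset(rows, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle rows and split them into disjoint train, validation, and test lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    validation = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains
    return train, validation, test


train, validation, test = split_dataset(range(100))
```

Shuffling before splitting avoids accidental ordering effects, and keeping the three sets disjoint ensures the test set measures performance on data the model has never seen.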

Training and refinement

With datasets in hand, the team can train the model. The team feeds the training dataset to the algorithm to teach it the appropriate features and parameters.

After training, the team can use the validation dataset to refine the model, which can include discarding or modifying variables and involves a process of tweaking the hyperparameters to reach an acceptable level of accuracy.
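To make the refinement step concrete, the sketch below tunes a single hyperparameter, the number of neighbors `k` in a toy one-dimensional nearest-neighbor classifier, by scoring each candidate on the validation dataset. The classifier, the data, and the function names are illustrative assumptions, not a method the article specifies.

```python
from collections import Counter


def knn_predict(train, x, k):
    """Predict the majority label among the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train, examples, k):
    """Fraction of examples whose label the k-NN model predicts correctly."""
    hits = sum(1 for x, label in examples if knn_predict(train, x, k) == label)
    return hits / len(examples)


def tune_k(train, validation, candidates):
    """Return (best_k, scores), choosing the k with the highest validation accuracy."""
    scores = {k: accuracy(train, validation, k) for k in candidates}
    best_k = max(scores, key=scores.get)  # ties go to the first candidate listed
    return best_k, scores


# Made-up training data with two noisy labels (at x=3 and x=8).
train = [(1, 0), (2, 0), (3, 1), (4, 0), (6, 1), (7, 1), (8, 0), (9, 1)]
validation = [(2.6, 0), (3.4, 0), (7.6, 1), (8.4, 1)]
best_k, scores = tune_k(train, validation, candidates=(1, 3, 7))
```

With this data, `k=1` follows the noisy labels and scores poorly on validation, while larger values smooth them out. The test dataset plays no part in this choice, so it remains available for an unbiased final check.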

Evaluation

Once the model’s accuracy is optimized and the team has selected an acceptable set of hyperparameters, it is time to test the model using the test dataset. The goal of testing is to verify that the model is using accurate features. Testing feedback can suggest a return to the training phase to adjust output settings or enhance accuracy, or signal that it’s time to deploy the model.

Best Practices for Defining the Machine Learning Project Workflow

There are several best practices to guide the process of defining the machine learning project workflow.

Define the project

Carefully define your ML project goals before starting, so that the model does not add redundancy to the process. Consider several issues when defining the project:

What is the existing process that the model will replace? How does that process work, who performs it, what are its goals, and how is success defined for this process? Answering these questions clarifies the role the new model must play, what criteria it must meet or exceed, and what restrictions in implementation may exist.

What do you want to predict? Define this carefully to understand what data to collect and how to train the model. In this step, be as detailed as possible and quantify results to ensure measurable goals that a team can actually meet.

What are the data sources? Assess what data and in what volume the process collects and uses now, and how it collects that data. Determine what specific data points and types from those sources the team needs to form predictions.

A functional approach

The goal of implementing machine learning workflows is to improve the accuracy or efficiency of the existing process. There are two basic steps toward identifying a functional approach that can achieve this goal, and you can repeat them as needed:

Research and investigation. It is critical to research the implementation of similar ML projects before implementing an approach. It can save money, time, and effort to learn from the mistakes of others or selectively borrow successful strategies.

Testing and experimentation. It is important to test and experiment with an approach, whether you have created it from whole cloth or found an existing approach to try. This correlates with model training and testing.

Create a complete solution

Developing a machine learning workflow often results in a product that is more like a proof-of-concept. This proof ultimately must translate into a functional result and deployable solution that can meet the team’s end goals. To ensure this happens:

Use A/B testing to compare the existing process and the proposed model. Confirm whether the model is effective, predicts what you want it to predict, and whether it can add value to relevant users and teams.
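One common way to judge such a comparison is a two-proportion z-test on the success rates of the two arms. The sketch below assumes each arm records a count of successful outcomes out of a number of trials; the counts are made up, and the choice of this particular test is an assumption rather than something the article specifies.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p-value) for the difference between two success rates."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution, written with erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: A is the existing process, B is the proposed model.
z, p_value = two_proportion_z_test(successes_a=400, n_a=1000,
                                   successes_b=460, n_b=1000)
```

A small p-value suggests the difference between the existing process and the model is unlikely to be due to chance alone; whether the difference is large enough to matter is a separate business judgment.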

Create a machine learning application programming interface (API) for model implementation so the model can communicate with services and data sources. If you will offer the model as an ML service, the accessibility provided by an API is especially important.

Create user-friendly documentation for the model including documentation of methods, code, and how to use it. Express to potential users of the model how they can leverage it, what kind of results to expect, and how to access those results, so the benefits are clear and the model itself is a more marketable product.

Automate ML

There are various tools, modules, and platforms for machine learning workflow automation, sometimes called AutoML. AutoML enables teams to perform some repetitive model development tasks more efficiently.

Right now it isn’t possible to automate all aspects of machine learning operations, but several aspects can be reliably automated:

- Hyperparameter optimization finds the optimal combination of pre-defined parameters using algorithms like random search, grid search, and Bayesian methods.

- Model selection determines which model is best suited to learn from your data by running the same dataset through multiple models with default hyperparameters.

- Feature selection tools choose features from pre-determined sets that are most relevant.
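The hyperparameter optimization item above can be sketched with the two simplest strategies it names, grid search and random search. In this illustration the objective function is a stand-in for "train a model and return its validation score", and the parameter names `learning_rate` and `depth` are hypothetical.

```python
import itertools
import random


def grid_search(objective, grid):
    """Evaluate every combination in `grid` and return (best_params, best_score)."""
    names = list(grid)
    best_params, best_score = None, float("-inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = objective(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def random_search(objective, grid, n_trials, seed=0):
    """Evaluate `n_trials` randomly sampled combinations instead of all of them."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(n_trials):
        params = {name: rng.choice(options) for name, options in grid.items()}
        score = objective(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Stand-in objective: highest when learning_rate is 0.1 and depth is 4.
def toy_objective(learning_rate, depth):
    return -((learning_rate - 0.1) ** 2) - ((depth - 4) ** 2)


grid = {"learning_rate": [0.01, 0.1, 1.0], "depth": [2, 4, 8]}
best_params, best_score = grid_search(toy_objective, grid)
sampled_params, sampled_score = random_search(toy_objective, grid, n_trials=4)
```

Grid search is exhaustive and therefore grows quickly with the number of parameters, while random search trades completeness for a fixed evaluation budget.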

How Does Arrikto Support Machine Learning Model Lifecycle Management?

To operationalize digital initiatives and unlock new revenue streams, organizations have widely adopted DevOps continuous workflows and cloud native patterns to manage software that is being deployed at scale. However, while software may be eating the world, data is now defining market leadership. How efficiently an industry leader manages their data, models it, and leverages it into machine learning algorithms now determines the size of their ‘competitive moat’ in their industry.

This means it is more important than ever to treat data as code. Organizations need to apply the same DevOps principles used for software development to manage data across the ML lifecycle from continuous integration and continuous deployment (CI/CD) through lineage, versioning, packaging, mobility, and differential tracking; cloning and branching; intelligent merging; and distributed collaboration. Although we take these principles for granted in modern software development, we are in a nascent stage with them for machine learning and data management.

Kubeflow has become the preferred MLOps automation platform because it offers a production workflow that is automated, portable, reproducible, and secure. However, there are currently four gaps between the potential for MLOps and the reality of deploying Kubeflow at scale in production:

- Portability Gap: fragmented environments. A data scientist’s local environment operates very differently than a production cloud environment.

- Automation Gap: disjointed workflows. Data scientists aren’t DevOps or infrastructure experts, but today they are expected to create Docker containers, publish to repos, write DSL and DAG definitions for pipelines, write YAML files, etc.

- Reproducibility Gap: data versioning hell. ML is unique: both data and code constantly change, making debugging, compliance, and collaboration nearly impossible.

- Security Gap: data sprawl. Without a way to isolate and track users, data is copied everywhere and credentials leak, creating security and audit challenges.

Arrikto enables any company to realize the MLOps potential of Kubeflow by enabling data scientists to build and deploy models faster, more efficiently and securely.

Much like DevOps shifted deployment left to developers in the software development lifecycle, Arrikto shifts deployment left to data scientists in the machine learning lifecycle. Arrikto introduces a feature-rich version of MLOps Kubeflow built for enterprises that empowers data scientists and ML engineers to work in their native environment, with familiar tools to rapidly build, train, debug, and serve models on Kubernetes in any cloud.

Arrikto has developed three key technologies to create an end-to-end MLOps solution: MiniKF, Kale, and Rok:

MiniKF: one standardized, consistent environment, ground to cloud

- Kubeflow on GCP, your laptop, or on-premises infrastructure in minutes (AWS coming very soon).

- All-in-one, single-node, Kubeflow distribution

- Contains Minikube, Kubeflow, Kale, and Rok

- Supports simple to sophisticated e2e ML workflows

- Exact same experience and features as Arrikto’s multi-node Enterprise Kubeflow offering

Kale: enable data scientists to easily generate production-ready pipelines for MLOps

- Automatically generate Kubeflow pipelines from ML code in any notebook

- Tag cells to define pipeline steps, hyperparameter tuning parameters, GPU usage, and metrics tracking

- Run the pipeline at the click of a button: auto-generate pipeline components and KFP DSL, automatically resolve dependencies, inject data objects to seed every step with the correctly versioned data, and generate and deploy the pipeline

- Take data snapshots before and after every step for debugging, reproducibility, and collaboration using Rok

- Use the Kale SDK to do all the above straight from a code repository, by just decorating existing functions

Rok: debug and collaborate on model development even as underlying model/code/data changes

- Data versioning, packaging, and sharing across teams and clouds to enable reproducibility, provenance, and portability

- Roll-back models to prior snapshots for model debugging

- Roll-back pipeline steps to exact execution state for simpler and faster debugging

- Collaborate with other data scientists through a GitOps-style, publish/subscribe versioning workflow

Arrikto’s MLOps platform puts machine learning capabilities within reach for any company, helping them achieve faster model time to market (from months to minutes), higher-quality models (from rapid debugging and easy collaboration), and improved model governance (from fully reproducible pipelines).

Learn more about how Arrikto’s Enterprise Kubeflow solution and Rok data management platform support machine learning model lifecycle management.