What is Kubeflow?

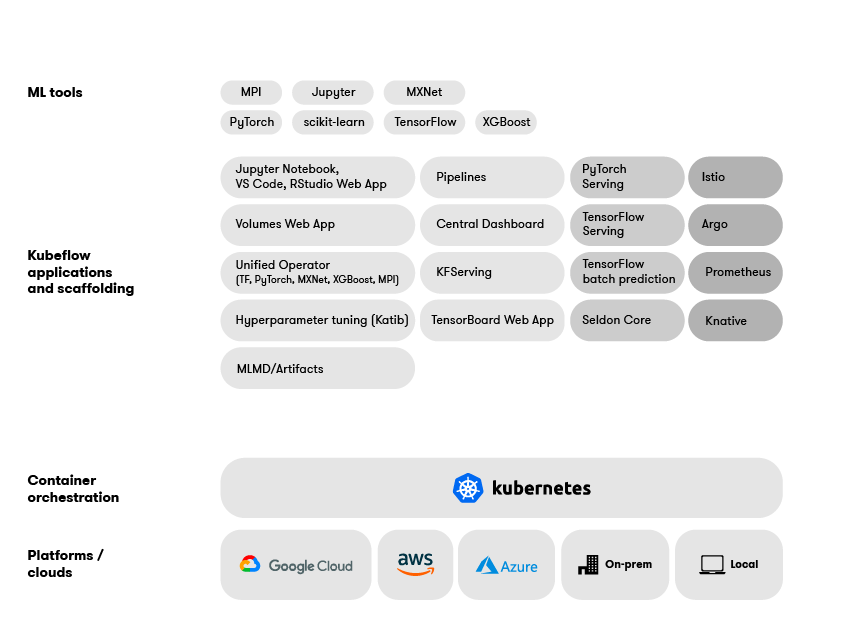

Originally developed by Google, Kubeflow is a complete open source MLOps toolkit. It includes integrated components for model development, training, multi-step pipelines, AutoML, serving, monitoring, artifact management, and experiment tracking.

Kubeflow is the open source MLOps standard

Kubeflow is supported by the biggest names in tech

Kubeflow has received over 1,000 contributions from companies like Google, AWS, Microsoft, VMware, Red Hat, Bloomberg, Cisco, IBM, and Intel.

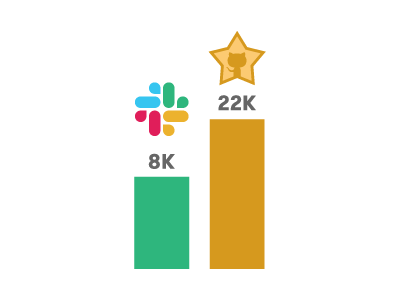

The Kubeflow community is growing

Launched in 2017 by Google, the Kubeflow project now boasts over 22,000 GitHub stars across all repos and almost 8,000 Slack members.

Kubeflow is a complete machine learning platform

Skip the integration nightmares. Kubeflow has everything you need for your production workflow built in, including model development, training, AutoML, serving, monitoring, and artifact management.

Arrikto makes Kubeflow, the same open source technology that companies like Google, Shell, Twitter, and Rakuten rely on to power their machine learning workflows, available on public clouds, on premises, or as a service.

Learn more about Arrikto Enterprise Kubeflow, or request a private MLOps workshop.


Fortune 100 companies succeed with Kubeflow

Companies like Shell rely on Kubeflow to power critical machine learning initiatives at scale. Enterprises and fast-growing startups alike rely on Kubeflow for MLOps.

Kubeflow reduces costs, while accelerating the delivery of scalable models in production

Reduced Model Development Times

Bring models to production faster

Reduce the time it takes your data scientists to set up development environments, run experiments, and tune models using AutoML. Kubeflow is a fully integrated MLOps platform that supports popular IDEs like JupyterLab, RStudio, and Visual Studio Code, plus AutoML, multi-step pipelines, and serving, all in one.

Scalable By Design

From laptop to GPU-powered cluster

Stop spending days or weeks trying to figure out why things developed locally don’t work in production. Kubeflow runs on top of Kubernetes to guarantee portability and reproducibility, whether you develop locally or in the cloud.

Lower Costs

Enterprise Machine Learning Without the Big Price Tag

For a production machine learning workflow, you can either build or buy point solutions for problems like model development, training, HPO, AutoML, pipelines, serving, and metadata tracking. Either way, you’ll need to spend time and money integrating them all. Or you can choose a single open source MLOps platform like Kubeflow, which already integrates many of the components you use today.

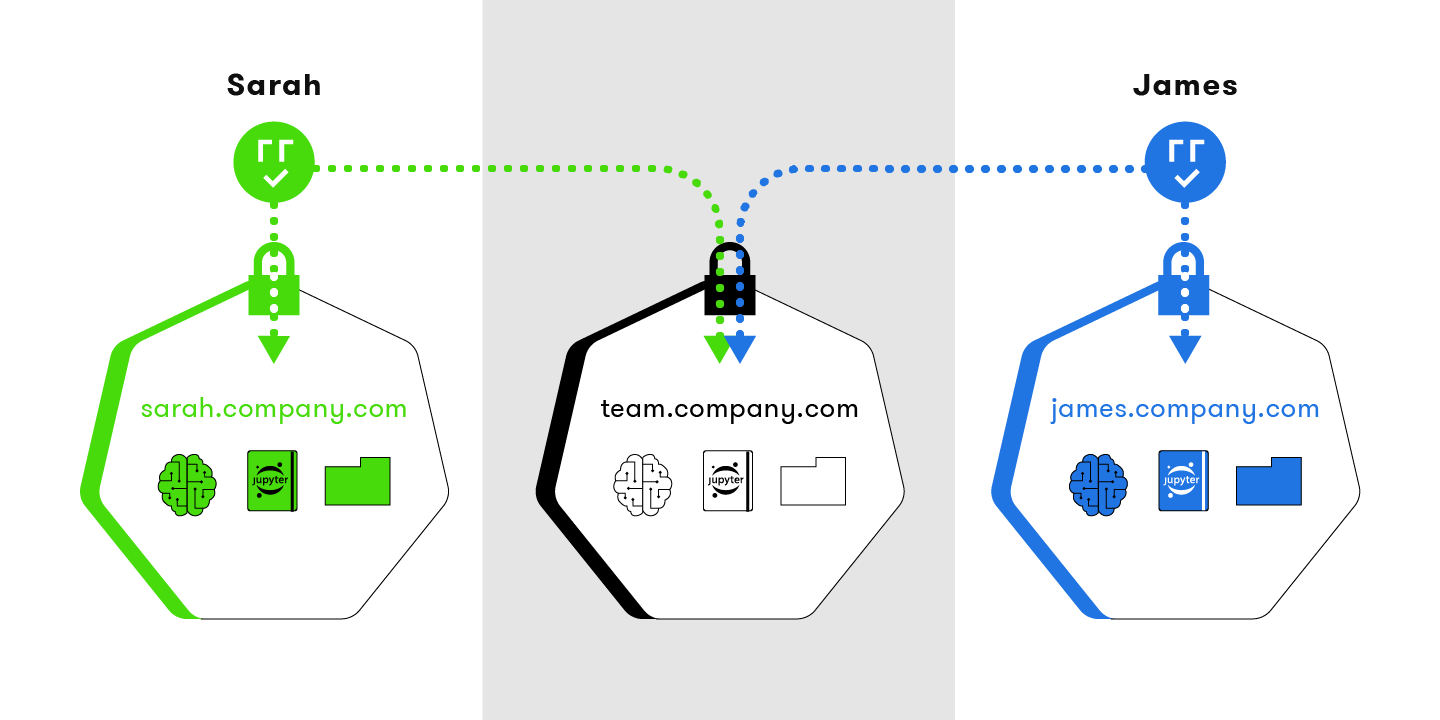

Streamlined Collaboration

No Matter Your Role, Kubeflow Has the Features You Need

Whatever software you build or buy to realize your machine learning workflows, data scientists are often asked to be DevOps experts and vice versa. Kubeflow is a platform that has all the capabilities data engineers, data scientists, ML engineers, DevOps, and SecOps need to run models in production. A common toolset that is tightly integrated means you don’t have to be an expert in a dozen technologies to unleash the power of machine learning at scale.

Arrikto is a Kubeflow community leader

Arrikto contributes code, leads development and release teams, organizes Meetups, sponsors open source contributors, and delivers free Kubeflow training and certification.

Arrikto makes it easy to get started with Kubeflow

Arrikto’s Kubeflow as a Service enables data scientists to get free access to a complete MLOps platform in just minutes.

Arrikto leads the development of Kubeflow

Arrikto contributes code, while also helping lead development working groups and release management teams.

Arrikto leads the Kubeflow community

Arrikto organizes the biggest MLOps and Kubeflow Meetups, with over 3,500 members, and offers free instructor-led and on-demand Kubeflow training, with over 5,000 students enrolled to date.

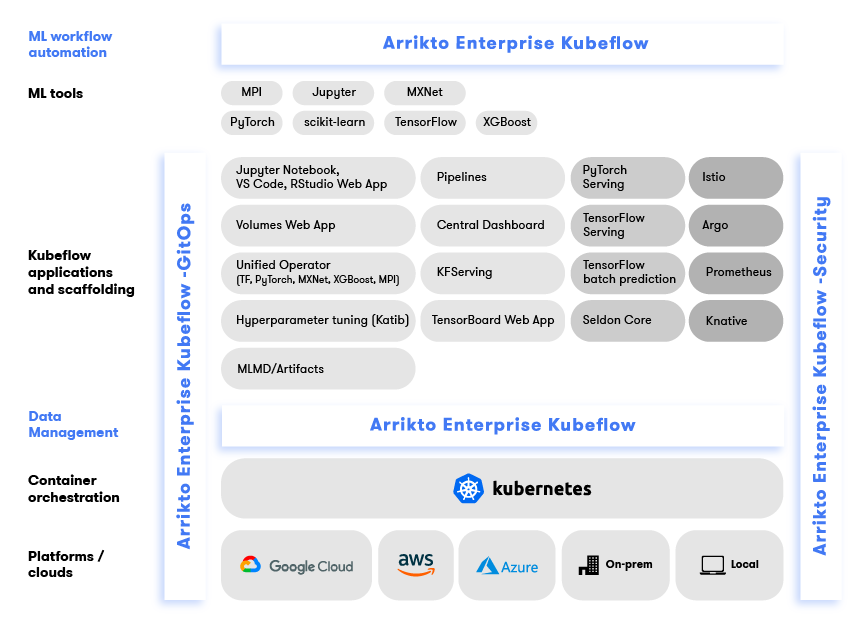

Arrikto’s Enterprise Kubeflow has all the features you need to run even the most complex machine learning workflows

Automation

Enable data scientists to easily orchestrate the whole workflow

Generate Kubeflow pipelines from machine learning code in any notebook with Arrikto’s Enterprise Kubeflow. Start by tagging cells in Jupyter Notebooks to define pipeline steps, hyperparameter tuning, GPU usage, and metrics tracking. With the click of a button, create pipeline components and Pipelines DSL, resolve dependencies, inject data objects into each step, deploy the data science pipeline, and serve the model. Or use the SDK with your preferred IDE.
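To make "resolve dependencies" between pipeline steps more concrete, here is a minimal sketch in plain Python. It is not Kubeflow's or Arrikto's API, and it does not reflect how either product is implemented. It only illustrates the general idea that a multi-step pipeline is a dependency graph whose steps must run in an order that respects their inputs. The step names and the `execution_order` helper are hypothetical and exist only for this example.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline, for illustration only.
# Each key is a step; its value is the set of steps it depends on.
steps = {
    "load_data": set(),
    "preprocess": {"load_data"},
    "train": {"preprocess"},
    "evaluate": {"train", "preprocess"},
    "serve": {"evaluate"},
}


def execution_order(dependencies):
    """Return the steps in an order where each step follows its dependencies."""
    return list(TopologicalSorter(dependencies).static_order())


order = execution_order(steps)
print(order)
# ['load_data', 'preprocess', 'train', 'evaluate', 'serve']
```

A real pipeline engine would also package each step as a container and pass data between steps. This sketch covers only the ordering part.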

Reproducibility

Roll back instantly to any machine learning workflow step for easy debugging and collaboration

With Arrikto’s Enterprise Kubeflow you can automatically snapshot notebooks, pipeline code and data at every step. Easily roll back to any workflow step at its exact execution state for debugging. Collaborate with other data scientists through a Git-like publish/subscribe versioning workflow.

Portability

One consistent machine learning environment from desktop to cloud.

Stop wasting time trying to figure out why things work in Dev but fail to scale in Prod. Arrikto’s Enterprise Kubeflow makes it easy to move models and data between environments, whether it be your laptop, a private data center, or the cloud.

Enterprise Security

Isolate users and their data with RBAC and fine-grained authorization controls.

Arrikto’s Enterprise Kubeflow makes it easy to manage teams and user access using your existing identity provider. Isolate user data access within their own namespaces while enabling notebook and pipeline collaboration in shared namespaces. You can also integrate with your existing credentials management system and connect your external data sources to Kubeflow, securely.

Kubeflow vs Arrikto Enterprise Kubeflow Feature Comparison

Deployment model

Core Components

Operationalize workflows on Kubernetes

Create and manage Jupyter Notebook servers

Create and manage RStudio and VS Code servers

Train your models using your favorite framework

Define workflows as pipelines of containerized steps

Orchestrate scalable pipeline runs

Run experiments comparing pipeline runs

Optimize models with hyperparameter tuning

Enable serverless inferencing using your favorite framework

Administer ML tools and workflows from a centralized dashboard

AUTOMATION

Create and delete volumes using a GUI

View and manage files inside PVCs

Ensure library consistency across environments

Iterate machine learning code without having to create new Docker images

Automate pipeline building

Perform hyperparameter tuning from within a notebook

Manage cluster lifecycle using GitOps

REPRODUCIBILITY

Snapshot every pipeline step

Snapshot notebooks

Keep snapshot versions for compliance

Specify snapshot frequency

Replicate snapshots across Kubeflow clusters

PORTABILITY

Test identical machine learning code across training and production environments

Share notebooks and datasets across clusters

Share notebooks between users

Run pipelines across clusters and clouds

SECURITY

Integrate with any identity provider for secure access

Share namespaces across teams

Manage credentials securely

Subscribe to and publish snapshot repos securely

Enable external services to use Kubeflow APIs securely

Kubernetes

Kubernetes

Ready for your machine learning initiatives to start delivering ROI?